
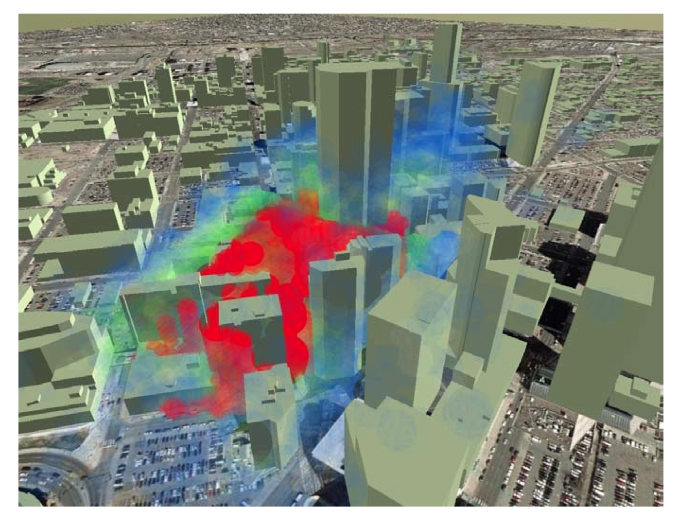

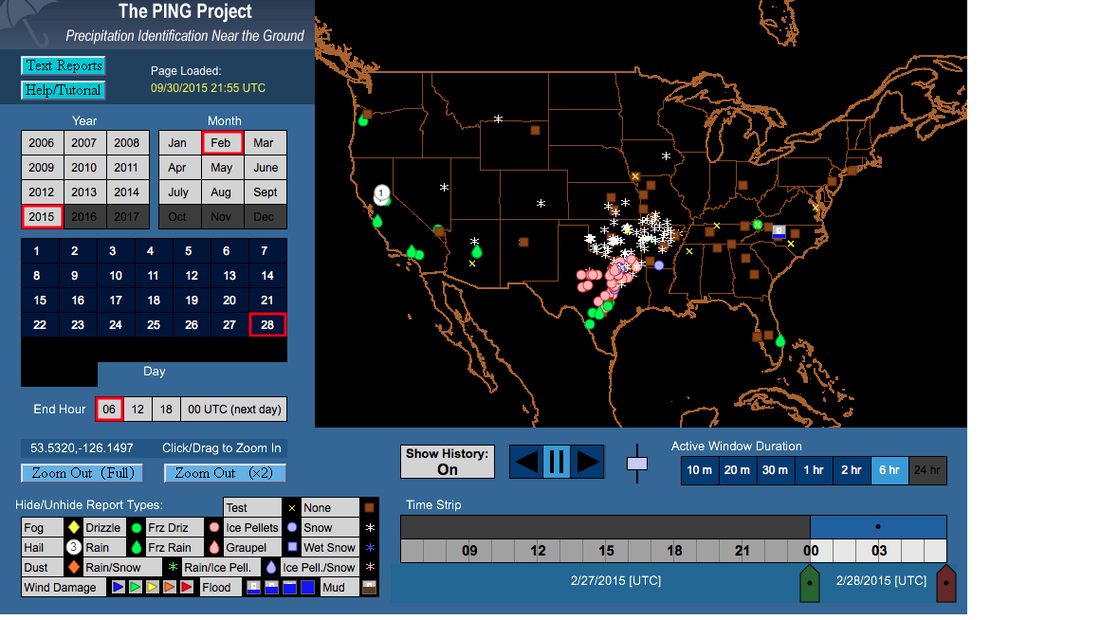

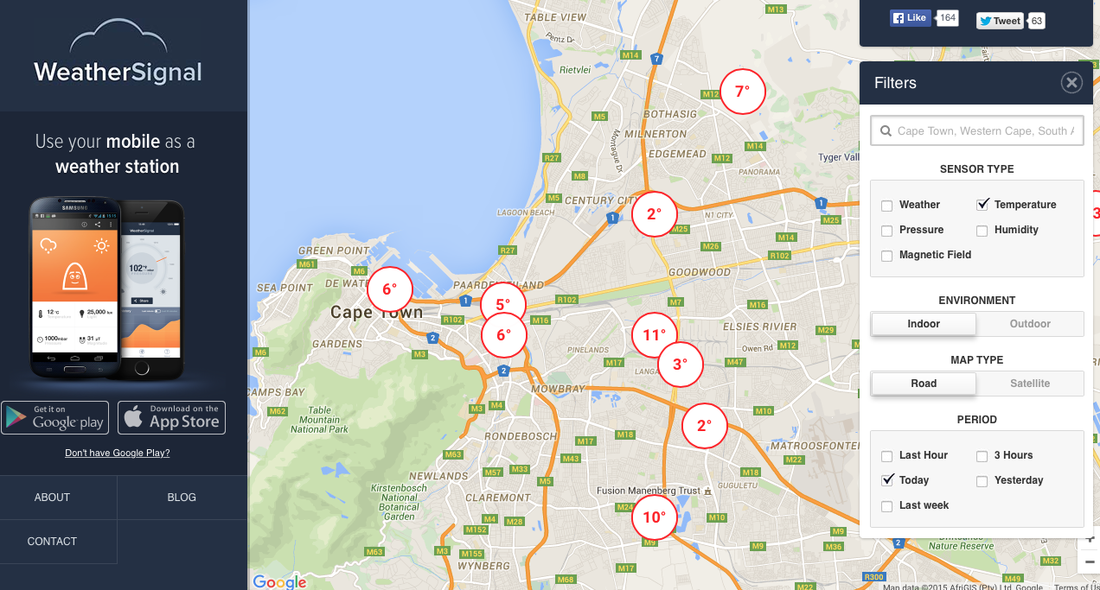

People have long yearned to glance at a forecast and step out the door knowing that the weather won't suddenly 'turn' in the hours that they're gone. This applies to decisions large and small: Is there time to get to the top of the mountain before the thunderstorm strikes? Or to run to the store without an umbrella? How much snow will fall this morning, and should there be a snow day? Is it safe to go out deep-sea fishing today? Looking at charts of improvement in forecasts over time might seem to suggest that real-time forecasts should be just about perfect. And indeed they are, if what you care about is the general circulation of the Earth in the mid-troposphere. As for how that translates to conditions at a point down on the surface, surrounded by the complex natural and manmade geography that characterizes the city-dweller's environment, the picture is not quite so clear. There is a downward trend in forecast errors for tropical storm landfalls, for instance, but this figure and the last both show a decreasing rate of improvement, bringing to mind (somewhat dishearteningly) Zeno's dichotomy paradox.

On the other hand, the tidal wave of data enabled by the proliferation of Internet connectivity gives a lot of reason for optimism; the challenge, as with most other Internet-related problems, is extracting what's relevant from the vast amounts of chatter. A Shanghai-area meteorological network has served as a major proof of concept for quick turnaround from mass data ingestion to nowcast output. Automated tracking of weather systems, together with efficient ensemble (multi-model) and statistical (multi-event) approaches for pinning down rapidly evolving features like thunderstorms, have proven their worth at major events like the Olympics and World's Fair, where it's cost-effective to bring in the experts, apply the high-resolution models, and deploy the necessary equipment.
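At its very simplest, tracking-based nowcasting amounts to extrapolation: take the latest observed field and slide it along its recent motion. The sketch below illustrates only that idea and is not how any operational system works. It assumes a regular grid and a single constant motion vector (real systems estimate a spatially varying one), and the function name `advect_nowcast` and its parameters are hypothetical.

```python
import numpy as np

def advect_nowcast(field, motion_cells_per_step, steps):
    """Extrapolate a 2-D field by shifting it along a constant motion vector.

    field: 2-D array (e.g. rain rate on a regular grid).
    motion_cells_per_step: (dy, dx) displacement in grid cells per time step
        (assumed known and uniform here, which is a strong simplification).
    steps: number of time steps to look ahead.
    Cells entering from outside the domain are filled with zeros.
    """
    field = np.asarray(field)
    dy, dx = (int(round(m * steps)) for m in motion_cells_per_step)
    ny, nx = field.shape
    out = np.zeros_like(field)
    # If the whole field has moved out of the domain, nothing is left.
    if abs(dy) >= ny or abs(dx) >= nx:
        return out
    # Matching source and destination windows for the shift.
    src_y = slice(max(0, -dy), min(ny, ny - dy))
    dst_y = slice(max(0, dy), min(ny, ny + dy))
    src_x = slice(max(0, -dx), min(nx, nx - dx))
    dst_x = slice(max(0, dx), min(nx, nx + dx))
    out[dst_y, dst_x] = field[src_y, src_x]
    return out

# A single storm cell moving one row down and two columns right per step.
radar = np.zeros((5, 5))
radar[1, 1] = 1.0
one_step_ahead = advect_nowcast(radar, (1, 2), steps=1)
```

Pure translation like this knows nothing about storms growing or decaying, which is where the ensemble and statistical approaches earn their keep.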
It all goes back to basic principles like advection and latent-heat release, but the sophistication of the approaches results in forecasts far more accurate than what could be done by extrapolating out a few hours with pencil and paper. It will be very interesting to see how machine learning can further reclaim the 'forecast-able' from the 'inherent chaos'.

Smartphones are of course the prototype of large-scale data-gathering, given their basic sensors, which various apps have exploited to plot dense real-time street-level temperature, humidity, and sometimes other variables for just about any city in the world (as in the second figure below). A glance reveals that data quality is a significant challenge: long-running debates on climate change, for instance, hinge on errors that are no more than 1 °C. And, somewhat counterintuitively, such errors can be more important in nowcasts (~hours) than in forecasts (~days or weeks), where the initial conditions matter less. The same principle applies to coming-years versus century-out projections, as shown in this classic figure (where internal variability effectively represents initial conditions, and two possible realities are compared).

On the other hand, the first figure below is from a webpage where users submit their observations of current precipitation type at their location, much like CoCoRaHS but qualitative and in real time. This is presumably more accurate than what any cheap sensor could detect; the tradeoff is that the quantity, and thus density, of observations is orders of magnitude lower. Whether either of these is preferable to the other, or to the medium quality and density of, say, Weather Underground's network of private traditional weather stations, probably depends mostly on what question is being asked.
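One way to make the quality-versus-density tradeoff concrete is a back-of-the-envelope error budget. The sketch below assumes that each reading carries independent random noise plus a bias shared by every sensor of that type (a phone warmed in a pocket, say). The function name and all numbers are hypothetical, chosen only for illustration.

```python
import math

def rms_error_of_mean(noise_sd, shared_bias, n_obs):
    """RMS error of the average of n_obs readings.

    Assumes independent random noise (standard deviation noise_sd) and a
    bias common to all readings. Averaging shrinks the noise term by
    1/sqrt(n_obs) but leaves the shared bias untouched.
    """
    if n_obs < 1:
        raise ValueError("need at least one observation")
    return math.sqrt(shared_bias ** 2 + noise_sd ** 2 / n_obs)

# Hypothetical networks: many noisy, biased phones vs. a few good stations.
phones = rms_error_of_mean(noise_sd=2.0, shared_bias=1.0, n_obs=10_000)
stations = rms_error_of_mean(noise_sd=0.3, shared_bias=0.0, n_obs=5)
print(f"phones: {phones:.2f} deg C, stations: {stations:.2f} deg C")
```

Under these assumptions, sheer numbers wash out random noise but do nothing about a systematic bias, which is one reason the answer depends on the question being asked.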
As for other automated observations, automobile-sensor networks that actively transmit meteorological data, to be shared and analyzed with a larger purpose than decorating your dashboard with a temperature reading, have been technologically feasible for a while, but so far sensors have mainly just reported on traffic conditions. The first widespread weather application will probably be fog alerts, since fog is ephemeral and a hazard mainly to drivers. A patent evidently purchased by Google reveals that automated ice detection on roads has been considered since at least 1978, but as of 2015 it's still best classified as a 'vision'.

As an aside, one big question to come out of all this is the importance of data vs. intuition, a recurring theme in this field. Psychologists are divided on whether more information helps people solve problems. From personal experience, though, I can say that to beat algorithms in daily weather forecasting, people need to have the algorithms' results first; if they do, they can triumph reliably, although only by tacitly accepting the algorithms' general interpretations. Many consulting services have emerged catering to events with short-term forecasting needs, which are arguably best served by a blend of advanced algorithms and expert judgment, often supplemented with special high-quality measurement sites. Will 'experienced' algorithms mature in their decision-making like experienced people? Or will they struggle to separate wheat from chaff? If real-time weather decisions are anything like real-time driving decisions, then perhaps it will prove to be a balancing act.

Simulation of a plume in downtown Denver five minutes after release, with color proportional to concentration. Winds are measured by lidar on the city scale, interpolated to the building level with one set of models, and filled in between buildings with another. Source: Warner and Kosovic 2010, "Fine-Scale Atmospheric Modeling at NCAR."
In the end, however, algorithms and machine-learning techniques are what they eat: they are only as good as the data they are fed. Though lots of data is now out there thanks to the sensors in our pockets, not nearly enough of it has been cleaned to a quality sufficient for operational nowcasts. Air pollution and wind modelers have seen very good results in simulations using urban 'schemes' (physics and chemistry packages), which can get down to a less-than-10-m scale, attached to standard high-resolution models like WRF (see figure above). However, these require commensurately high-resolution information on buildings, vegetation, anthropogenic heat, soil moisture, and the like, and often special tools like lidar for verification. In other words, they do well in small spatial windows but not so well in small temporal ones. Consequently, in the best operational nowcasts, put out by the Weather Prediction Center, about 1/3 of the area gets the predicted amount of rain (here, 0.25"-0.50"), so you can expect to keep getting caught in the rain, or lugging your umbrella around in the sunshine, for many years to come.
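For readers curious how an area figure like that might be computed, here is a minimal sketch. It is not the Weather Prediction Center's verification method, only one plausible way to score a single forecast contour on a grid. The function name, arguments, and sample values are all hypothetical.

```python
import numpy as np

def fraction_in_range(observed, forecast_mask, low, high):
    """Fraction of the forecast area where observed rain fell within [low, high].

    observed: 2-D array of observed rainfall (inches here).
    forecast_mask: boolean array, True where the forecast called for low..high.
    Assumes equal-area grid cells; returns NaN if the forecast area is empty.
    """
    obs = np.asarray(observed, dtype=float)
    mask = np.asarray(forecast_mask, dtype=bool)
    if not mask.any():
        return float("nan")
    hits = (obs >= low) & (obs <= high) & mask
    return hits.sum() / mask.sum()

# Made-up 2x2 example: three cells were forecast to get 0.25"-0.50".
observed = [[0.30, 0.10],
            [0.60, 0.40]]
forecast = [[True, True],
            [True, False]]
score = fraction_in_range(observed, forecast, 0.25, 0.50)  # 1 of 3 cells hit
```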
